Connected Apps Are Being Retired in Salesforce

Starting with Spring ’26, Salesforce disabled the creation of new Connected Apps by default across all orgs. Your existing ones still work for now. But the direction is clear: External Client Apps are the future, and the clock is running.

This guide covers what changed, why Salesforce made this move, what External Client Apps actually give you, which apps are and are not affected, and a step-by-step migration walkthrough in App Manager. The second half is a structured checklist for the audit before you migrate and the testing after. The TLS certificate changes happening in the same release are covered at the end, because both come from the same security-first motivation.

Spring ’26 Migration Timeline & TLS Certificate Phase-Down

Connected Apps → External Client Apps
1. Winter ’26: New Connected App creation disabled by default in new orgs (Opt-in)
2. Spring ’26: New creation disabled across all orgs; Support request required (Enforced)
3. Summer ’26: Expected enforcement deadline; plan migration before this (Deadline)
4. Summer ’26: Triple DES for SAML SSO stops working completely (Hard Stop)

TLS Certificate Lifespan Phase-Down (CA-signed certificates only)
A. Until Mar 14, 2026: Max lifespan 398 days (previous standard)
B. Mar 15, 2026: Max lifespan drops to 200 days (Now)
C. Mar 15, 2027: Max lifespan drops to 100 days (Plan for)
D. Mar 15, 2029: Max lifespan drops to 47 days; automation required (Automate)

Why Salesforce is making this change

Connected Apps have been part of Salesforce for over a decade. If you have ever set up OAuth for a third-party integration, configured Data Loader, or connected a custom web application to the Salesforce API, you have used one. They are everywhere, and most of them work fine. The problem is not the apps that are configured properly. The problem is the ones that are not, and the architecture that makes unsafe configurations easy to create.

By default, Connected Apps allow any API-enabled user in an org to self-authorise a connection to an external application, without admin approval. That is how phishing and vishing attacks that targeted Salesforce orgs worked: trick a user into authorising a malicious Connected App, and the attacker has API access to the org’s data. Salesforce responded by tightening this behaviour, but the structural issue remained.

External Client Apps take a different starting position. They adopt a closed security posture by default. Access is not granted unless an administrator explicitly permits it. Furthermore, the architecture separates developer configuration from admin policy, which means a developer building an integration cannot inadvertently override security settings that the admin put in place.

What changed in Spring ’26, specifically

The rollout has been gradual. In Winter ’26, Salesforce disabled Connected App creation by default in new orgs, with an option for admins to re-enable it manually. Spring ’26 tightened that further: new Connected App creation is now disabled across all orgs, including existing ones. Getting the ability back requires a Salesforce Support request, and Salesforce has been clear that this option will eventually disappear entirely.

Two categories of Connected Apps are not affected by this change. Connected Apps created as part of a managed package continue to work and can still be created in that context. Connected Apps used for Slack in the legacy Agentforce Builder are also excluded. Everything else follows the new default. Any new integration from Spring ’26 onward must be built as an External Client App.

⚠ What happens if you try to create a new Connected App without a Support request

The New Connected App button no longer appears in App Manager; the option is gone from the UI entirely. Attempting to create one via the Metadata API returns an error. There is no workaround inside the platform.

Any new integration built after Spring ’26 that requires its own OAuth client must use an External Client App instead. If a specific business case genuinely requires a new Connected App, you must open a Salesforce Support case and explicitly request the capability. Salesforce may or may not grant it, and this option will be removed in a future release.

What External Client Apps offer that Connected Apps do not

External Client Apps are not just Connected Apps with a new name. The differences are architectural, and several of them matter a lot depending on how your org is set up.

Separation of developer settings and admin policies

In a Connected App, the developer configuration and admin security policies live in the same record. A developer with edit access to the Connected App can change the OAuth scopes, the IP restrictions, and the session policies. In an External Client App, those roles are separated. Developers manage the technical settings; admins manage the access policies. Neither can override the other without the appropriate permission.

For ISVs and AppExchange partners, this matters enormously. It means the admin installing your package can control how it behaves in their org without touching the underlying app configuration.

Second-generation packaging support

External Client Apps are designed specifically for second-generation packaging, or 2GP. Connected Apps technically work with 2GP, but the process requires manual steps that are fragile and time-consuming. ECAs package cleanly, distribute correctly, and integrate naturally with source control and CI/CD pipelines via the Metadata API.

For admins managing integrations rather than building packages, the practical difference is that ECAs behave more predictably in sandboxes and scratch orgs. Scratch Org support for External Client Apps was added in Spring ’26, which makes the developer testing cycle considerably cleaner.

Closed security posture by default

A new External Client App is not accessible to any user until an administrator explicitly grants access. There is no self-authorisation pathway by default. This is the core security difference from Connected Apps, and it is the reason Salesforce is moving in this direction.

What ECAs do not yet support

Two important gaps remain. External Client Apps do not support the Username-Password OAuth flow. If any of your existing Connected Apps use this flow, you cannot migrate them

Salesforce Pipeline Accuracy For SaaS Companies

Your Salesforce pipeline shows $400K. Your actual closeable pipeline is probably closer to $180K. That difference is not a forecasting error. It is a structural problem that most SaaS teams at the 20 to 50 person stage have, and most do not catch it until a board meeting goes badly.

There are three specific patterns that create what we call phantom pipeline. They are not exotic edge cases. They show up in almost every SaaS org we look at between 20 and 50 people. Here is what they are.

What your dashboard shows: $400K
Pipeline total across all open opportunities
✗ Deals with no activity in 60+ days
✗ Contacts who stopped replying in March
✗ Renewals with no opportunity attached
✗ Accounts with zero product usage

What you are actually working with: $180K
Active, contactable, closeable this quarter
✓ Activity logged within last 30 days
✓ Contact responded within last 14 days
✓ Renewal opportunity created and staged
✓ Product usage confirms active engagement

1. Dead deals that nobody closed

There are opportunities in your Salesforce right now that have not moved in 90 days. They are still sitting at Stage 3 or Stage 4, still counting toward the pipeline total, and nobody is touching them. Reps do not close them because closing a deal as lost hurts their quota attainment number. Managers do not push because the conversation is uncomfortable. So the deal sits there, neither alive nor dead, just inflating the number.

In practice, this means your pipeline report is showing revenue from opportunities that have a near-zero probability of closing this quarter. The reps know it. The managers suspect it. The dashboard does not.

A deal with no activity in 30 days and no reply in 14 days is not in your pipeline. It is in your wish list. Those are different things.

2. Renewals that are not tracked at all

At a SaaS company, renewal revenue is often more predictable than new business, but only if someone is actually tracking it. Most orgs at this stage have no renewal opportunity objects, no renewal stage, and no alert when a contract is 60 days out.

What happens instead: the CS team finds out it is renewal time when the client asks why they were auto-charged. Or worse, the client reaches out to say they want to cancel, and that is the first time anyone internally knew the renewal was approaching.

That is not a process. That is luck. And it means that a significant portion of your actual annual recurring revenue (the revenue that should be the most predictable number in your business) is completely invisible in the tool you use to run sales.

When renewal ARR is not in the pipeline, two things happen. Forecasts are wrong. And at-risk accounts are not identified in time to do anything about them.

3. Product data that never reaches the CRM

Your product knows who is logging in every day. It knows who activated three core features last week and who has not touched the app in six weeks. That information exists somewhere in your stack: in Mixpanel, Amplitude, Pendo, or whatever analytics tool you use. Your CRM has no idea.

Consequently, reps spend time calling accounts that are completely disengaged, because those accounts have an open opportunity at Stage 2. Meanwhile, accounts that are thriving, using the product heavily, and expanding their team are getting no expansion outreach because nothing in Salesforce flags them as a priority.

The most valuable signal in a SaaS business, actual product behavior, is entirely absent from the tool where selling happens. So the pipeline that shows up in your forecast is built on CRM activity and deal stages, not on what your customers are actually doing.

1. Dead deals nobody closed
Opportunities stalled for 90 days are still in the pipeline because closing them hurts quota. Reps avoid it. Managers avoid it. The dashboard keeps counting them.
No activity in 30+ days = not in your pipeline

2. Renewals with no opportunity object
No renewal stage, no contract expiry alert, no tracking. The CS team finds out it is renewal time when the client asks about the auto-charge. That is not a process.
No renewal object = invisible ARR risk

3. Product data that never reaches the CRM
Who logs in daily, who activated core features, who has not touched the app in six weeks: all of this exists in your analytics stack and none of it is in Salesforce.
Product behavior invisible to reps = wrong priorities

The question worth asking today

Pull up your pipeline right now. Then apply three filters:
1. Remove every deal with no activity logged in the last 30 days.
2. Remove every deal where no contact has responded in the last 14 days.
3. Remove every account expiring in the next 90 days that does not have a renewal opportunity attached.

What number are you left with? For most SaaS teams at this stage, the answer is significantly lower than what the dashboard shows. And knowing the real number, even if it is uncomfortable, is always better than being optimistic about the wrong one. You cannot fix a problem you cannot see.

Phantom pipeline is not a Salesforce problem. It is a process problem that Salesforce happens to be hiding very effectively. These three patterns show up in almost every SaaS org we talk to between 20 and 50 people. If you want to
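The three-filter reality check described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `Deal` record and its field names are hypothetical (they are not Salesforce API names), the deals are invented to echo the $400K and $180K figures, and the renewal filter is applied per deal rather than per account for simplicity.

```python
from dataclasses import dataclass
from datetime import date, timedelta
from typing import List, Optional


@dataclass
class Deal:
    # Hypothetical fields; map them to whatever your own export provides.
    name: str
    amount: int
    last_activity: date                   # last logged activity on the deal
    last_contact_reply: date              # last time a contact responded
    contract_end: Optional[date] = None   # contract expiry, if the account has one
    has_renewal_opportunity: bool = False


def closeable_pipeline(deals: List[Deal], today: date) -> List[Deal]:
    """Apply the three filters and return the deals that survive."""
    kept = []
    for deal in deals:
        # Filter 1: no activity logged in the last 30 days.
        if (today - deal.last_activity).days > 30:
            continue
        # Filter 2: no contact has responded in the last 14 days.
        if (today - deal.last_contact_reply).days > 14:
            continue
        # Filter 3: expiring within 90 days with no renewal opportunity attached.
        expiring_soon = (
            deal.contract_end is not None
            and 0 <= (deal.contract_end - today).days <= 90
        )
        if expiring_soon and not deal.has_renewal_opportunity:
            continue
        kept.append(deal)
    return kept


# Invented sample data, chosen only to mirror the article's example totals.
today = date(2026, 3, 1)
deals = [
    Deal("Active new business", 100_000,
         today - timedelta(days=5), today - timedelta(days=3)),
    Deal("Tracked renewal", 80_000,
         today - timedelta(days=7), today - timedelta(days=2),
         today + timedelta(days=60), True),
    Deal("Stalled at Stage 3", 120_000,
         today - timedelta(days=95), today - timedelta(days=80)),
    Deal("Contact went quiet", 60_000,
         today - timedelta(days=10), today - timedelta(days=40)),
    Deal("Untracked renewal", 40_000,
         today - timedelta(days=2), today - timedelta(days=1),
         today + timedelta(days=45), False),
]

real = closeable_pipeline(deals, today)
dashboard_total = sum(d.amount for d in deals)
real_total = sum(d.amount for d in real)
print(f"Dashboard: ${dashboard_total:,}  Closeable: ${real_total:,}")
```

The thresholds (30, 14, and 90 days) come straight from the filters above; in practice they would be tuned to your own sales cycle.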

Agentforce Sales 2026 Is Live: What Every Director Needs to Know

Salesforce just gave every sales rep a digital coworker that never sleeps, never forgets a follow-up, and never loses a lead.

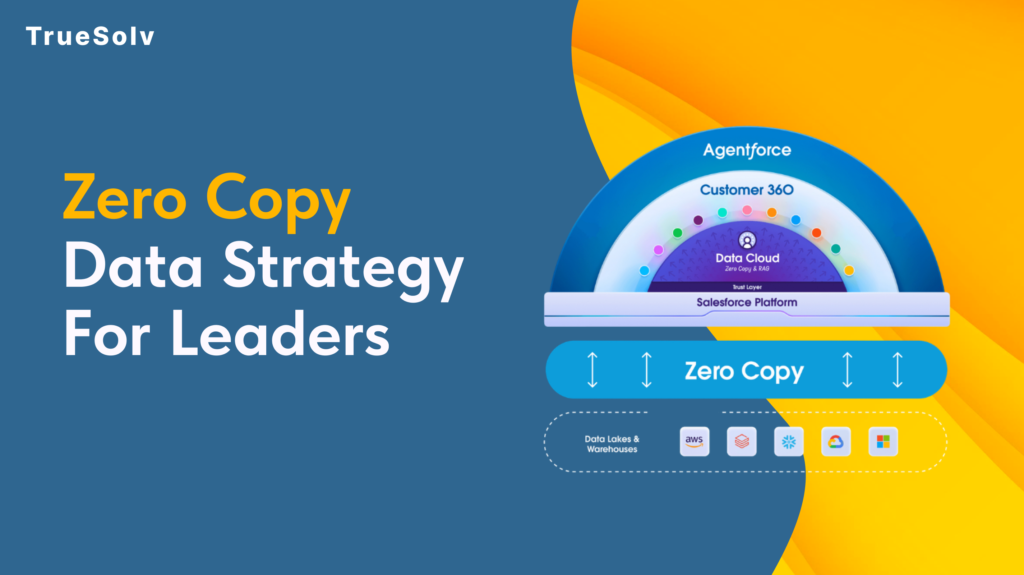

Zero Copy Data Strategy For Salesforce Leaders

Your data pipeline costs are high because duplication is still the default

Moving data feels like progress. Pipelines get built, jobs get scheduled, dashboards get populated. Then the bills arrive and the numbers on those dashboards are still two hours old.

Zero Copy is Salesforce’s answer to that pattern. The concept is straightforward: query the data where it lives instead of copying it somewhere else first. The strategic implications for how organisations manage their data estate are considerably less straightforward, and that is what leaders need to understand before committing to a rollout.

What Zero Copy changes for cost and speed

Traditional data integration between a warehouse like Snowflake or BigQuery and a platform like Salesforce has followed the same basic model for years. Extract data from the source, transform it, load it into the destination, keep the sync job running, fix it when it breaks, reconcile the drift when numbers do not match. Every copy is a maintenance obligation.

Zero Copy replaces that model with direct federation. Salesforce Data 360 connects to the external system and sends queries against the data where it already lives. The results come back without a copy of the underlying data ever moving to a new location. When the source data changes, the next query reflects that change immediately.

The cost reduction argument operates on two levels. Storage costs drop because duplicate datasets are eliminated. Engineering costs drop because the sync pipelines, the error handling, the reconciliation processes, and the monitoring overhead that comes with them no longer need to exist. For organisations running multiple integration pipelines into Salesforce, that engineering overhead is more significant than the storage bill.

On speed, the practical outcome depends heavily on where data physically sits relative to where the query runs. Data 360 uses advanced query pushdown, which delegates computation back to the originating warehouse rather than pulling raw data across and processing it in Salesforce. When the data and the compute are in the same cloud region, this is fast. When they are not, the cross-region transfer introduces the latency that Zero Copy was supposed to eliminate.

Use cases that work well

Zero Copy performs well in specific scenarios, and those scenarios share common characteristics.

Operational reporting where freshness matters. If a revenue dashboard, a service queue metric, or an account health score needs to reflect what happened in the last fifteen minutes rather than the last sync cycle, federating from the warehouse eliminates the lag. The data is always current because it is never a copy.

Large reference datasets that would be expensive to replicate. Product catalogues, entitlement records, historical transaction data, enrichment datasets from third-party providers. These are large, they change infrequently at the record level, and they are expensive to maintain as copies. Federating them into Data 360 for use in segmentation and identity resolution keeps the warehouse as the source of truth without duplicating the storage cost.

AI and agent workloads requiring real-time context. Agentforce and Einstein features fed by stale copied data produce outputs that reflect the past rather than the present. Zero Copy allows AI features to operate against live warehouse data, which meaningfully changes the quality of the output in time-sensitive interactions such as service escalations or dynamic pricing decisions.

Bidirectional insight sharing. Zero Copy is not only inbound. Data 360 can share unified customer profiles, segmentation outputs, and AI-generated insights back to the warehouse without replication. Teams that need Salesforce-derived insights in their BI tools or data science environments get those outputs written back to Snowflake or BigQuery without another pipeline layer.

Security and access implications

Zero Copy changes the security model in ways that require deliberate attention before deployment.

With traditional ingestion, access control is applied when data arrives in Salesforce. The ingested dataset can be governed independently of the source. With Zero Copy, access control lives at the source. The permissions set in Snowflake, BigQuery, or the relevant warehouse determine what Salesforce can see. If those permissions are broad, the federation inherits that breadth.

The implication for leaders is that permission mapping needs to happen before Zero Copy goes live, not after. Which tables and views is Data 360 authorised to query? Which fields within those tables? Which profiles or roles within Salesforce can access the federated data once it appears in the platform? These questions have answers that sit across two systems, and the governance model needs to account for both.

PII handling deserves specific attention. One of the stated benefits of Zero Copy is that personally identifiable information stays in its original governed environment rather than being duplicated into a new location. That is accurate, but it does not reduce the compliance obligation. If GDPR, HIPAA, or any other regulatory framework applies to the data in the warehouse, federating it into Salesforce does not change what those obligations require. Compliance teams should be part of the Zero Copy governance conversation from the beginning.

Salesforce provides Private Connect for Data 360, which allows federating from warehouse environments locked within a private cloud network. For organisations with strict network isolation requirements, this is the relevant configuration to understand before assuming Zero Copy requires exposing source systems to the public internet.
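The permission-mapping questions in this section can be made concrete with an explicit allowlist that is compared against what the warehouse role actually exposes. The sketch below is a minimal illustration, not a Salesforce or warehouse API: the table names, field names, and the `find_overbroad_grants` helper are all hypothetical, and the `granted` mapping is assumed to come from whatever grant export your warehouse provides.

```python
from typing import Dict, List, Set, Tuple

# Hypothetical allowlist: table or view -> fields the federation is meant to see.
ALLOWED: Dict[str, Set[str]] = {
    "analytics.orders": {"order_id", "account_id", "amount", "order_date"},
    "analytics.entitlements": {"account_id", "plan", "expires_on"},
}


def find_overbroad_grants(
    granted: Dict[str, Set[str]],
    allowed: Dict[str, Set[str]],
) -> List[Tuple[str, str]]:
    """Return (object, field) pairs that are granted at the source
    but missing from the agreed allowlist."""
    violations: List[Tuple[str, str]] = []
    for obj, fields in granted.items():
        permitted = allowed.get(obj, set())  # unknown objects permit nothing
        violations.extend((obj, field) for field in fields - permitted)
    return sorted(violations)


# Assumed input: what the warehouse role used for federation can currently read.
granted = {
    "analytics.orders": {
        "order_id", "account_id", "amount", "order_date", "customer_email",
    },
    "analytics.payment_methods": {"card_last4"},
}

violations = find_overbroad_grants(granted, ALLOWED)
for obj, field in violations:
    print(f"Not in allowlist: {obj}.{field}")
```

Running a check like this before go-live turns "if those permissions are broad, the federation inherits that breadth" into a reviewable list, which is easier to hand to a compliance team than a description of warehouse roles.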

Implementation checklist and governance

Before a Zero Copy rollout, the following decisions should be made explicitly rather than discovered during deployment.

Identify the use cases. List the specific reporting, segmentation, or AI scenarios that will use federated data and confirm that Zero Copy fits each one based on the criteria above.

Audit the source data. Assess data quality, field naming conventions, and data type handling in the warehouse before connecting it to Data 360. Quality problems in the source appear directly in the federation.

Map permissions before connecting. Define exactly which tables, views, and fields Data 360 is authorised to access. Do not default to broad warehouse permissions because the connection is easier to configure that way.

Confirm cloud region alignment. Verify that Data 360 infrastructure and the warehouse are in the same cloud region. Cross-region

Salesforce Health Check service for secure CRM

Find security gaps, slow automation, dirty data, and brittle integrations, then fix them with a Salesforce Health Check by TrueSolv.